Texts as Data

Research using texts is perhaps the most defining feature of digital humanities work.

To understand texts, we’ll start by using one of the most widely studied collections of texts in the field: State of the Union messages given by U.S. Presidents. State of the Union Addresses are a useful baseline because they are openly and freely available. The files that we use here are predominantly from the American Presidency Project at the University of California, Santa Barbara: https://www.presidency.ucsb.edu/documents/presidential-documents-archive-guidebook/annual-messages-congress-the-state-the-union.[1] Speeches from 2015 and later were taken straight from the White House.

We’re also going to dig a little deeper into two important aspects of R we’ve ignored so far: functions (in the sense that you can write your own) and probability.

Loading texts into R

You can read in a text the same way you would a table. But the most basic way is to take a step down the complexity ladder and read it in as a series of lines.
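Here is a minimal sketch of reading a file line by line with base R’s readLines. The two-line sample text is made up, and it is written to a temporary file so the example runs anywhere. With the real data, you would point readLines at one of the speech files instead.

```r
# Write a tiny made-up two-paragraph text to a temporary file
path <- tempfile(fileext = ".txt")
writeLines(c("This is the first paragraph.", "Don't forget the second one."), path)

# readLines returns a character vector with one element per line (here, per paragraph)
text <- readLines(path)
length(text)
text[2]
```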

As a reminder, you probably need to update the package:

If you type in “text,” you’ll see that we get the full 2015 State of the Union address, broken into multiple lines.

As structured here, the text is divided into paragraphs. For most of this chapter, we’re going to be interested instead in working with words.

Tokenization

Let’s pause and note a perplexing fact: although you probably think that you know what a word is, that conviction should grow shaky the more you think about it. “Field” and “fields” take up the same dictionary definition: is that one word or two?

In the field of corpus linguistics, the term “word” is generally dispensed with as too abstract in favor of the idea of a “token” or “type.”

Where a word is more abstract, a “type” is a concrete term used in actual language, and a “token” is a particular instance of that type. The type-token distinction is used across many fields. Philosophers like Charles Sanders Peirce, who originated the terms @williams_tokens_1936, use it to distinguish between abstract things (‘wizards’) and individual instances of the thing (‘Harry Potter’). Numismatists (scholars of coins) use it to characterize the hierarchy of coins: a single mint might produce many coins from the same mold, which is the ‘type’; each individual coin is a token of that type.

Breaking a piece of text into words is thus called “tokenization.” There are many ways to do it, and coming up with creative tokenization methods will be helpful in the algorithms portion of this class. But the simplest is to split the text wherever there is something that isn’t a letter. The R function strsplit does just this: it splits a string into pieces wherever a regular expression matches. We could use the regular expression [^A-Za-z] to say “split on anything that isn’t a letter between A and Z.” Note, for example, that this turns the word “Don’t” into two words, “Don” and “t.”
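A minimal sketch of that split with base R’s strsplit, using the same made-up two-paragraph sample as a stand-in for the speech:

```r
text <- c("This is the first paragraph.", "Don't forget the second one.")

# Split every paragraph on any single character that is not an ASCII letter
words <- strsplit(text, "[^A-Za-z]")

# The result is a list with one character vector per paragraph
words[[2]]
```

Two runs of non-letters in a row (say, a period followed by a space) would leave an empty string between them; the pattern "[^A-Za-z]+" treats such a run as a single break.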

You’ll notice that each paragraph now comes back in a strange, nested form. This is because strsplit returns a list (one element per paragraph), a data structure other than a data.frame. Lists can be useful, but they don’t let us apply the rules of tidy analysis we’ve been working with on tibble objects from the tidyverse. A single element of that list, though (the words of one paragraph), can be turned into a column in a tibble by passing it to the tibble function.
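A sketch of that step, again on the made-up sample text: pull one element out of the list with [[ ]] and hand it to tibble as a column.

```r
library(tibble)

text <- c("This is the first paragraph.", "Don't forget the second one.")
words <- strsplit(text, "[^A-Za-z]")

# words[[1]] is a plain character vector: the words of the first paragraph
first_paragraph <- words[[1]]

# Wrap it in a one-column tibble so the usual tidy verbs apply
word_table <- tibble(word = first_paragraph)
word_table
```

To keep every paragraph rather than just one, `tibble(word = unlist(words))` flattens the whole list into a single column.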

Now we can use the “tidytext” package to start to analyze the document.

The workhorse function in tidytext is unnest_tokens. It creates a new column (here called ‘words’) with one row for each token in the text column. This is conceptually the same as the splitting above, but with a great deal of hard-earned knowledge built in.

You’ll notice, immediately, that this looks a little different: each of the words is lowercased, and we’ve lost all punctuation.
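A minimal sketch of unnest_tokens on the same made-up sample. It names the new column `word` (the conventional tidytext name, which also matches the column in tidytext’s stop_words table). It also keeps a paragraph number so we know where each token came from.

```r
library(dplyr)
library(tibble)
library(tidytext)

text <- c("This is the first paragraph.", "Don't forget the second one.")

# One row per paragraph in; one row per word out
tokens <- tibble(paragraph = seq_along(text), text = text) %>%
  unnest_tokens(word, text)

tokens
```

Unlike the strsplit version, the default word tokenizer keeps “don’t” as a single token, lowercases everything, and drops the periods.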

The choices of tokenization

There are, in fact, at least seven different choices you can make in a typical tokenization process. (I’m borrowing an ontology from Matthew Denny and Arthur Spirling, 2017.)

- Should words be lowercased?

- Should punctuation be removed?

- Should numbers be replaced by some placeholder?

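- Should words be stemmed (reduced to a common root, so that “field” and “fields” count as one term)? Lemmatization is a related, dictionary-based way of doing this.

- Should bigrams or other multi-word phrases be used instead of, or in addition to, single words?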

- Should stopwords (the most common words) be removed?

- Should rare words be removed?

Any of these can be combined: seven yes-or-no choices give 2^7 = 128 possible combinations, so there are over a hundred common ways to tokenize even the simplest dataset. Here are a few examples of the difference that can make, with code that shows the appropriate settings:
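A sketch of a few of these choices with tidytext, on a single made-up sentence. Lowercasing is controlled by unnest_tokens’ to_lower argument and bigrams by token = "ngrams" with n = 2. Stopwords are removed with an anti_join against tidytext’s bundled stop_words table. The "<NUM>" placeholder for numbers is simply a label chosen for this example.

```r
library(dplyr)
library(tibble)
library(tidytext)

speech <- tibble(text = "The State of the Union is strong, and 2015 was a good year.")

# Default: lowercased, punctuation stripped, numbers kept
default_tokens <- speech %>% unnest_tokens(word, text)

# Keep the original capitalization
cased_tokens <- speech %>% unnest_tokens(word, text, to_lower = FALSE)

# Two-word sequences (bigrams) instead of single words
bigrams <- speech %>% unnest_tokens(bigram, text, token = "ngrams", n = 2)

# Drop stopwords using the stop_words table that ships with tidytext
no_stopwords <- default_tokens %>% anti_join(stop_words, by = "word")

# Replace any all-digit token with a placeholder
numbers_replaced <- default_tokens %>%
  mutate(word = if_else(grepl("^[0-9]+$", word), "<NUM>", word))
```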

In any case, whatever definition of a word you use needs to have some use. So: What can we do with such a column put into a data.frame?

Wordcounts

First off, you should be able to see that the old combination of group_by, summarize, and n() lets us create a count of words in the document.

This is perhaps the time to tell you that there is a shortcut in dplyr to do all of those at once: the count function. (A related function, add_count, attaches the same counts as a new column while keeping every original row.)
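A small sketch comparing the long way and the shortcut on a made-up vector of tokens. Both produce the same table, and add_count leaves the number of rows unchanged.

```r
library(dplyr)
library(tibble)

tokens <- tibble(word = c("the", "union", "the", "state", "of", "the", "union"))

# The long way: group, then summarize with n(), then sort
long_way <- tokens %>%
  group_by(word) %>%
  summarize(n = n()) %>%
  arrange(desc(n))

# The shortcut: count() groups, counts, and (with sort = TRUE) sorts in one step
short_way <- tokens %>% count(word, sort = TRUE)

# add_count() keeps all seven rows and adds an n column to each
with_counts <- tokens %>% add_count(word)
```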

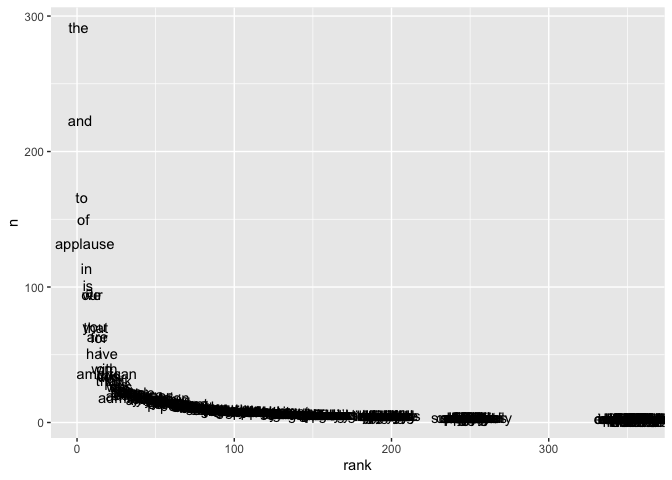

Using ggplot, we can plot the most frequent words.

Word counts and Zipf’s law

This is an odd chart: all the data is clustered in the lower right-hand quadrant, so we can barely read any but the first ten words.

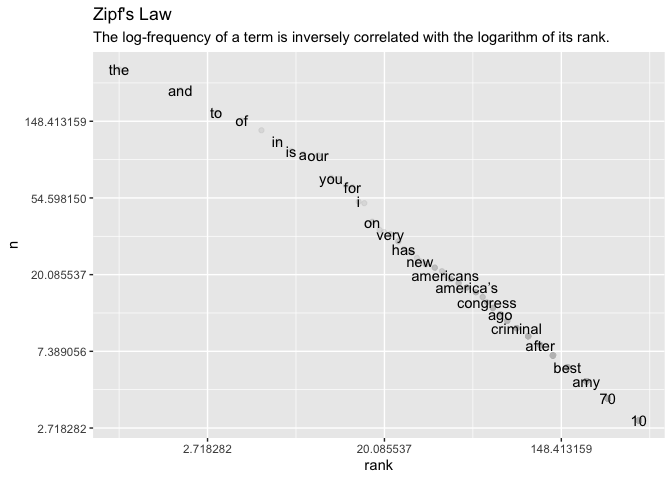

As always, you should experiment with multiple scales, and especially think about logarithms.

Putting logarithmic scales on both axes reveals something interesting about the way the data is structured: the curve turns into a nearly straight line.

To put this formally, the logarithm of a word’s count decreases linearly with the logarithm of its rank.

This is “Zipf’s law”: in its idealized form, the most common word is twice as common as the second most common word, three times as common as the third most common word, four times as common as the fourth most common word, and so forth.
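To see why the log-log plot becomes a straight line, here is a sketch with idealized counts rather than real data. Assume the word at rank r appears C / r times, with C = 1000 chosen arbitrarily. Taking logs gives log(count) = log(C) - log(rank), a line with slope -1, and a linear fit recovers that slope.

```r
# Idealized Zipf counts: rank r gets C / r occurrences (C = 1000 is an arbitrary choice)
rank <- 1:200
count <- 1000 / rank

# Ratios to the top word follow the 2x, 3x, ... pattern
count[1] / count[2]
count[1] / count[3]

# On log-log scales the points fall on a straight line with slope -1
fit <- lm(log(count) ~ log(rank))
coef(fit)
```

With a real word-count table you would fit the same model to its rank and count columns. Real texts follow the pattern only approximately, so the fitted slope is typically near, not exactly at, -1.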

It is named after the linguist George Kingsley Zipf, who popularized the pattern in the 1930s and 1940s; among his examples were laborious counts of the individual words in Joyce’s Ulysses.

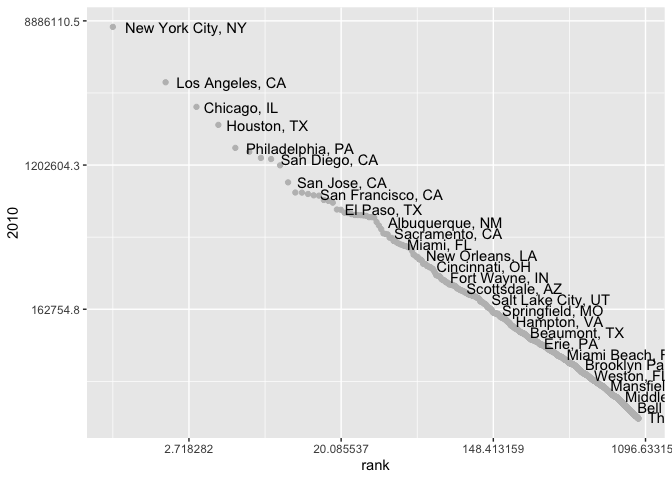

This is a core textual phenomenon, and one you must constantly keep in mind: common words are very common indeed, and logarithmic scales are more often appropriate for plotting than linear ones. This pattern results from many dynamic systems where the “rich get richer,” which characterizes all sorts of systems in the humanities. Consider, for one last time, our city population data.

It shows the same pattern. New York is roughly twice as large as LA, which is roughly half again as large as Chicago, which is somewhat larger than Houston… and so forth down the line.

(Not every country shows this pattern; for instance, both Canada and Australia have two cities of comparable size at the top of their list. A pet theory of mine is that that is a result of the legacy of British colonialism; in some functional way, London may occupy the role of “largest city” in those two countries. The states of New Jersey and Connecticut, outside New York City, also lack a single dominant city.)

Concordances

This tibble can also build what used to be the effort of entire scholarly careers: a “concordance” that shows the usage of each individual word in context. We do this by adding a second column to the frame that holds not the current word but the one after it. dplyr includes lag and lead functions that shift a vector backward or forward. You specify by how many positions you want a vector to “lag” or “lead” (one, by default), and the two columns end up offset against each other by that many rows.

By using lead on a character vector, we can neatly align each word with the word that follows it (lag does the reverse, aligning each word with the one before it). By grouping on both words, we can use that to count bigrams:
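A sketch of bigram counting with lead, on a made-up token column standing in for a tokenized speech. The last word has no successor, so its NA pair is filtered out before counting.

```r
library(dplyr)
library(tibble)

tokens <- tibble(word = c("the", "state", "of", "the", "union", "is", "strong",
                          "and", "the", "state", "of", "our", "economy"))

# lead() shifts the column up by one, pairing each word with the next one
bigrams <- tokens %>%
  mutate(next_word = lead(word)) %>%
  filter(!is.na(next_word))

# Counting on both columns counts each two-word sequence
bigram_counts <- bigrams %>%
  count(word, next_word, sort = TRUE)

bigram_counts
```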

Doing this several times gives us snippets of the text we can read across as well as down.

Using filter, we can see the context for just one particular word. This is a concordance, which lets you look at any word in context.
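A minimal concordance sketch on the same made-up tokens: lag and lead by one and two positions build a five-word window, filter keeps the rows for one word, and str_c glues the window into a readable line. Using default = "" instead of NA at the edges is a choice made here so that str_c doesn’t return NA near the start or end of the text.

```r
library(dplyr)
library(tibble)
library(stringr)

tokens <- tibble(word = c("the", "state", "of", "the", "union", "is", "strong",
                          "and", "the", "state", "of", "our", "economy"))

concordance <- tokens %>%
  mutate(
    before2 = lag(word, 2, default = ""),
    before1 = lag(word, 1, default = ""),
    after1  = lead(word, 1, default = ""),
    after2  = lead(word, 2, default = "")
  ) %>%
  filter(word == "state") %>%
  # Upper-case the keyword; str_squish removes the extra space left by empty edges
  mutate(context = str_squish(str_c(before2, before1, str_to_upper(word),
                                    after1, after2, sep = " "))) %>%
  select(context)

concordance
```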

Functions

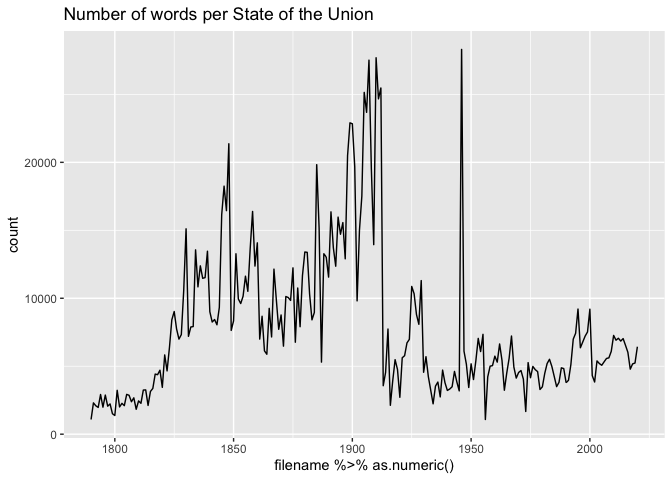

That’s just one State of the Union. How are we to read them all in?

We could obviously type all the code in, one speech at a time. But that’s bad programming!

To work with this, we’re going to finally discuss a core programming concept: functions.

We’ve been using functions all along; but whenever you’ve written up a useful batch of code, you can also bundle it into a function of your own that you can then reuse.

Here, for example, is a function that will read the state of the union address for any year.

Note that we add one more thing to the end: a column that identifies the year.
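Here is a sketch of such a reader function. It assumes one plain-text file per year, named like 2015.txt, inside a folder; the function name read_sotu and the default folder "SOTUS" are assumptions for illustration. For the demo it writes one made-up file to a temporary folder.

```r
library(dplyr)
library(tibble)
library(tidytext)

# Hypothetical reader: assumes files named "<year>.txt" inside `folder`
read_sotu <- function(year, folder = "SOTUS") {
  path <- file.path(folder, paste0(year, ".txt"))
  tibble(text = readLines(path, warn = FALSE)) %>%
    mutate(paragraph = row_number()) %>%  # remember which paragraph each word came from
    unnest_tokens(word, text) %>%
    mutate(year = year)                   # the extra column identifying the year
}

# Demo: a temporary folder holding one made-up "speech"
demo_folder <- file.path(tempdir(), "sotu_demo")
dir.create(demo_folder, showWarnings = FALSE)
writeLines(c("This is the first paragraph.", "Don't forget the second one."),
           file.path(demo_folder, "2015.txt"))

speech <- read_sotu(2015, folder = demo_folder)
speech
```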

We also need to know all the names of the files! R has functions for this built in natively: you can do all sorts of file manipulation programmatically, from downloading to renaming and even deleting files from your hard drive.
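A sketch using base R’s list.files. It creates three empty placeholder files in a temporary folder (the folder and file names are made up) and then lists them. It also turns the file names back into years, assuming the "<year>.txt" naming pattern.

```r
# Make a temporary folder with a few empty placeholder files
demo_folder <- file.path(tempdir(), "sotu_files_demo")
dir.create(demo_folder, showWarnings = FALSE)
invisible(file.create(file.path(demo_folder, c("2013.txt", "2014.txt", "2015.txt"))))

# Just the file names that end in .txt (list.files returns them sorted)
files <- list.files(demo_folder, pattern = "\\.txt$")

# Strip the extension to recover the years
years <- as.integer(sub("\\.txt$", "", files))

# full.names = TRUE gives paths you can pass straight to readLines
full_paths <- list.files(demo_folder, pattern = "\\.txt$", full.names = TRUE)
```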

Now we have a list of State of the Unions and a function to read them in. How do we automate this procedure? There are several ways.

The most traditional way, dating back to the programming languages of the 1950s, would be to write a for loop. This is OK to do, and maybe you’ll see it at some point. But it’s not the approach we take in this class.

It’s worth knowing how to recognize this kind of code if you haven’t seen it before. But we aren’t using it here. Good R programmers almost never write a for-loop; instead, they use functions that abstract up a level from working on a single item at a time.

But if you’re working in, say, Python, this is almost certainly how you’d do it.
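So that you can recognize the pattern, here is a sketch of the for-loop approach in R. The body builds a one-row stand-in data frame where a real version would call a reader function for each year. Afterwards, do.call(rbind, ...) stacks the pieces.

```r
years <- c(2013, 2014, 2015)

# Pre-allocate one slot per year, then fill the slots one at a time
results <- vector("list", length(years))
for (i in seq_along(years)) {
  # Stand-in for reading one speech
  results[[i]] <- data.frame(year = years[i], label = paste("Speech from", years[i]))
}

# Stack the per-year pieces into one data frame by rows
all_years <- do.call(rbind, results)
all_years
```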

One of the most basic of these functions is called “map”: it takes two arguments, a list and a function, and applies the function to each element of the list. The word “map” can mean so many things that you could write an Abbott-and-Costello routine about it. It can mean cartography, it can mean a lookup dictionary, or it can mean a process of applying a function multiple times.

The tidyverse comes with a variety of special functions for performing mapping in this last sense, including one for combining dataframes by rows called map_dfr.
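The same job with purrr’s map_dfr, as a sketch. read_one is a hypothetical stand-in for a real reader function. map_dfr calls it once per year and binds the resulting tibbles by rows.

```r
library(dplyr)
library(purrr)
library(tibble)

years <- c(2013, 2014, 2015)

# Hypothetical stand-in for a function that reads one year's speech
read_one <- function(year) tibble(year = year, label = paste("Speech from", year))

# Apply read_one to every year and bind the results by rows
all_years <- map_dfr(years, read_one)
all_years
```

In recent versions of purrr, map_dfr is marked as superseded; `list_rbind(map(years, read_one))` is the suggested replacement and gives the same result here.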

Metadata Joins

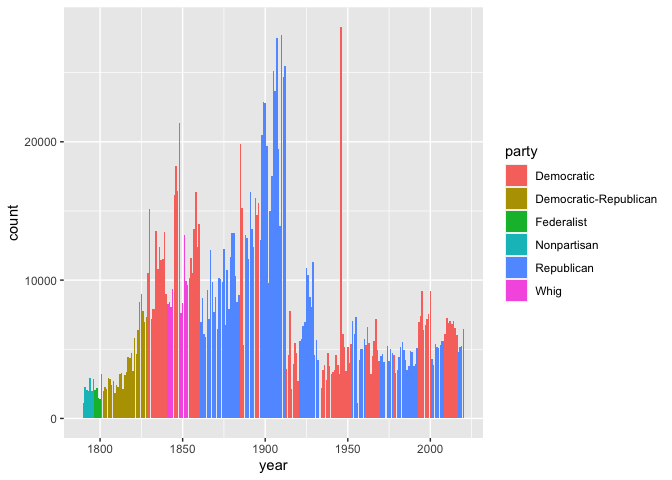

The metadata here is edited from the Programming Historian.

This metadata has a field called ‘year’; we already have a field called ‘filename’ that represents the same thing, except that the data types don’t match: one is stored as a number, the other as text. So here we convert one to match the other and then do a join.
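A sketch of the type fix and join on two tiny tables. It assumes filename holds the year as text and the metadata’s year is numeric; if your types are the other way around, convert in the other direction. The token rows are made up.

```r
library(dplyr)
library(tibble)

# Made-up tokens, keyed by a text-valued filename
tokens <- tibble(filename = c("1997", "1997", "2015"),
                 word = c("bridge", "century", "century"))

# Metadata keyed by a numeric year
metadata <- tibble(year = c(1997, 2015),
                   president = c("William J. Clinton", "Barack Obama"))

# Convert filename to a number so the key columns match, then join
joined <- tokens %>%
  mutate(year = as.numeric(filename)) %>%
  left_join(metadata, by = "year")

joined
```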

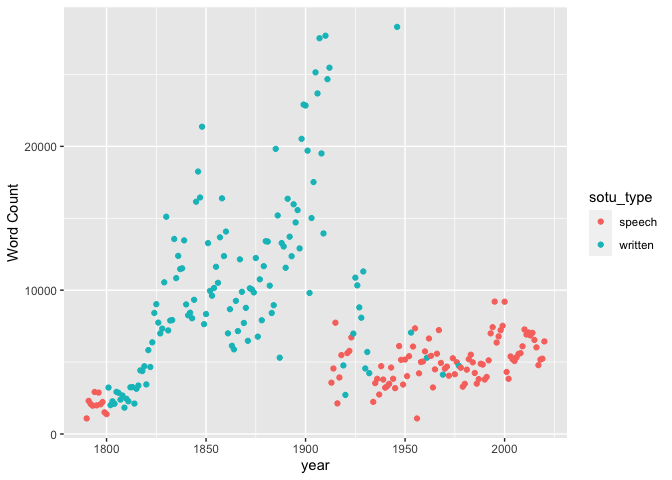

There’s an even more useful metadata field in here, though: whether the speech was written or delivered aloud.

One nice application is finding words that are unique to each individual author. We can do that to find words that only appeared in a single State of the Union. We’ll put in a quick lowercase function so as not to worry about capitalization.
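A sketch of finding words that appear in only one speech, on made-up tokens. Lowercasing first means “Bridge” and “bridge” count as the same word. Grouping by word then keeps only words whose rows all come from a single year.

```r
library(dplyr)
library(tibble)

tokens <- tibble(year = c(1997, 1997, 1997, 2015, 2015, 2015),
                 word = c("Bridge", "century", "welfare", "Century", "internet", "bridge"))

unique_words <- tokens %>%
  mutate(word = tolower(word)) %>%       # ignore capitalization
  group_by(word) %>%
  filter(n_distinct(year) == 1) %>%      # appears in exactly one speech
  ungroup() %>%
  distinct(year, word)

unique_words
```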

Unique words are not the best way to make comparisons between texts: see the chapter on “advanced comparison” for a longer discussion.

Subword tokenization with sentencepiece

Some notes on integrating more complicated tokenization with tidytext in R

Tidytext works well in English, but for some languages the assumption that a “word” can be created through straightforward tokenization is more problematic. In languages like Turkish or Finnish, agglutination (the composition of words through suffixes) is common; in languages like Latin or Russian, words may appear in many different forms; and in Chinese, text may not have any whitespace delineation at all.

“Sentencepiece” is one of several strategies for so-called “subword encoding” that have become popular in neural-network based natural language processing pipelines. It works by looking at the statistical properties of a set of texts to find linguistically common breakpoints.

You tell it how many individual tokens you want it to output, and it divides words up into portions. These portions may be as long as a full word or as short as a letter.

Especially common words will usually be preserved as they are. In the R implementation, pieces that start a word are prefixed with ▁; so, for instance, the most common token is “▁to”. This covers not just the word “to” but also the beginnings of “tough”, “tolerance”, and other words that start with “to”.
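To make the ▁ convention concrete, here is a sketch in base R on a hypothetical vector of subword pieces, written in the style sentencepiece produces rather than taken from an actual model. It flags the pieces that begin a word, then glues everything back together to recover whole words. "\u2581" is the escape for the ▁ character.

```r
# Hypothetical subword pieces; "\u2581" (the ▁ character) marks the start of a word
pieces <- c("\u2581to", "ugh", "\u2581the", "\u2581to", "\u2581tol", "er", "ance")

# Which pieces begin a new word?
starts_word <- startsWith(pieces, "\u2581")

# Concatenate the pieces, turn each ▁ into a space, and split to get words back
rebuilt <- trimws(gsub("\u2581", " ", paste(pieces, collapse = ""), fixed = TRUE))
words <- strsplit(rebuilt, " ")[[1]]
words
```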

The number I give here, 4000 tokens, is fairly small. If you are doing this on a substantially sized corpus, you might want to go as high as 16,000 or more tokens. But the choice you make will undoubtedly be inflected by the language you’re working in, so above all, look at whether the segments you get seem to be occasionally useful.

Train a model, then tokenize

Sentencepiece doesn’t know ahead of time how it will split up words; instead, it looks at the words in your collection to determine a plan for splitting them up. This means you have an extra step: you must train your model on a list of files before actually doing the tokenization. Here, we pass it a list of all the files it should read. The model will be saved in the current directory if you want to reuse it later.

With the model saved, you can now tokenize a list of strings with the ‘sentencepiece_encode’ function. To use the model, it needs to be passed as the first argument to the function; but we can still use the same functions to put the words in a tibble and unnest them.

Probabilities and Markov chains

Let’s look at probabilities, which let us apply our merging and functional skills in a fun way.

This gives a set of probabilities. What words follow “United?”
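A sketch of turning bigram counts into transition probabilities, on a made-up token column. Count each word-next_word pair, then divide each count by the total for its first word, so that the probabilities for each word sum to one.

```r
library(dplyr)
library(tibble)

tokens <- tibble(word = c("the", "united", "states", "and", "the", "united",
                          "nations", "and", "the", "united", "states"))

transitions <- tokens %>%
  mutate(next_word = lead(word)) %>%
  filter(!is.na(next_word)) %>%
  count(word, next_word) %>%
  group_by(word) %>%
  mutate(probability = n / sum(n)) %>%  # share of this word's successors
  ungroup()

# What follows "united"?
transitions %>% filter(word == "united")
```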

We can use the weight argument to sample_n. If you run this several times, you’ll see that you get different results.
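A sketch of a weighted draw with sample_n. The candidate words and their probabilities are made up. set.seed makes a single run reproducible; remove it to see the draws change from run to run.

```r
library(dplyr)
library(tibble)

# Made-up candidates for the word after "united"
candidates <- tibble(next_word = c("states", "nations", "kingdom"),
                     probability = c(0.7, 0.2, 0.1))

set.seed(1)  # fix the random draw so this run is reproducible
next_pick <- candidates %>% sample_n(1, weight = probability)
next_pick
```

In current dplyr, sample_n is superseded by `slice_sample(n = 1, weight_by = probability)`, which does the same weighted draw.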

So now, consider how we combine this with joins. We can create a new column from a seed word, join it in against the transitions frame, and then use the function str_c, which pastes strings together, to create a new column holding our new text.

(Are you having fun yet?) Here’s what’s interesting. This gives us a column that’s called “text”; and we can select it, and tokenize it again.

Why is that useful? Because now we can combine this join and the wordcounts to keep doing the same process! We tokenize our text; take the very last token; and do the merge.
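A sketch of one step of that process, wrapped in a small helper so it can be repeated. The transitions table is made up, and add_word is a hypothetical name. The step tokenizes the text, keeps the last token, joins it against the transitions, draws a successor by weight, and pastes it on with str_c. The early return for words with no known successor is an addition so the sketch never tries to sample from an empty table.

```r
library(dplyr)
library(tibble)
library(stringr)
library(tidytext)

transitions <- tibble(word = c("the", "the", "united", "united"),
                      next_word = c("united", "people", "states", "nations"),
                      probability = c(0.5, 0.5, 0.8, 0.2))

add_word <- function(generated) {
  last_word <- generated %>%
    unnest_tokens(word, text) %>%
    slice_tail(n = 1)                        # the very last token
  options <- last_word %>% inner_join(transitions, by = "word")
  if (nrow(options) == 0) return(generated)  # dead end: nothing follows this word
  pick <- options %>% sample_n(1, weight = probability)
  generated %>% mutate(text = str_c(text, pick$next_word, sep = " "))
}

generated <- tibble(text = "the")
generated <- add_word(generated)
generated <- add_word(generated)
generated$text
```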

Try running this piece of code several different times.

You can do this until your fingers get tired; but we can also wrap it up as a function. In the tidyverse, the simplest functions are pipelines that start with a dot (.), which magrittr’s %>% turns into a reusable function.
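A tiny sketch of such a dot-pipeline. Starting a %>% chain with . instead of a value creates a function, and calling that function runs the pipeline on its argument. The name last_token is just for illustration.

```r
library(magrittr)

# A pipeline that starts with "." is a function waiting for its input
last_token <- . %>% strsplit(" ") %>% unlist() %>% tail(1)

last_token("the united states")
```

This shortcut belongs to magrittr’s %>%; it does not work with R’s native |> pipe, where you would write an ordinary function such as `\(x) tail(strsplit(x, " ")[[1]], 1)` instead.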

A more head-scratching way is to use a technique called “recursion.”

Here the function calls itself if it has more than one word left to add. This would be something a programming class would dig into; here just know that functions might call themselves!
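A recursive sketch. To keep it short, it swaps the transitions data frame for a named list that maps each word to its possible successors (a simplification, not the chapter’s join-based approach), and it draws successors uniformly rather than by weight. generate calls itself with one fewer word to add until it hits zero or a word with no successors.

```r
library(stringr)

# Hypothetical transition table as a named list: word -> possible next words
transitions <- list(
  the    = c("united", "people"),
  united = c("states", "nations"),
  people = c("of"),
  of     = c("the")
)

generate <- function(text, words_left) {
  if (words_left == 0) return(text)                  # base case: nothing left to add
  last <- tail(str_split(text, " ")[[1]], 1)
  options <- transitions[[last]]
  if (is.null(options)) return(text)                 # dead end: no known successor
  next_word <- sample(options, 1)
  generate(str_c(text, next_word, sep = " "), words_left - 1)  # the recursive call
}

generate("the", 5)
```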

State of the Union

Let’s review some visualization, filtering, and grouping functions using State of the Union Addresses. This initial block should get you started.

- What is the first word of the 7th paragraph of the 1997 State of the Union Address?

- What was the first State of the Union address to mention “China?” (Remember that you may have made all words lowercase.)

- What context was that first use?

- Which post-1912 president spoke the most words in State of the Union addresses? (Note “spoke”; the filter here gets rid of the written reports.)

- Which president had the shortest average speech? (Note: this requires two group_bys.)

- Make a plot of the data from the previous answer.

Joins

- Create a data frame with two columns: ‘total_words’ and ‘year’, giving the words per speech by year.

- Create a line chart for words related to the military.

- Do the same, but with a list of religious words. In a nutshell, what’s the history of uses of religious words in State of the Unions?

(Note: the words you use here matter! I still rue a generalization I helped someone make about this dataset on incomplete data. There’s one word, in particular, that will give you the wrong idea of the pre-1820 religious language if it is not included.)

Free Exercise

Fill a folder with text files of your own. Read them in, and plot the usage of some words by location, paragraph, or something else. Use a folder name other than “SOTUS”, but do move the folder into your personal R folder.

[1] Gerhard Peters and John T. Woolley. “The State of the Union, Background and Reference Table.” The American Presidency Project. Ed. John T. Woolley and Gerhard Peters. Santa Barbara, CA: University of California. 1999-2020. Available from the World Wide Web: https://www.presidency.ucsb.edu/node/324107/